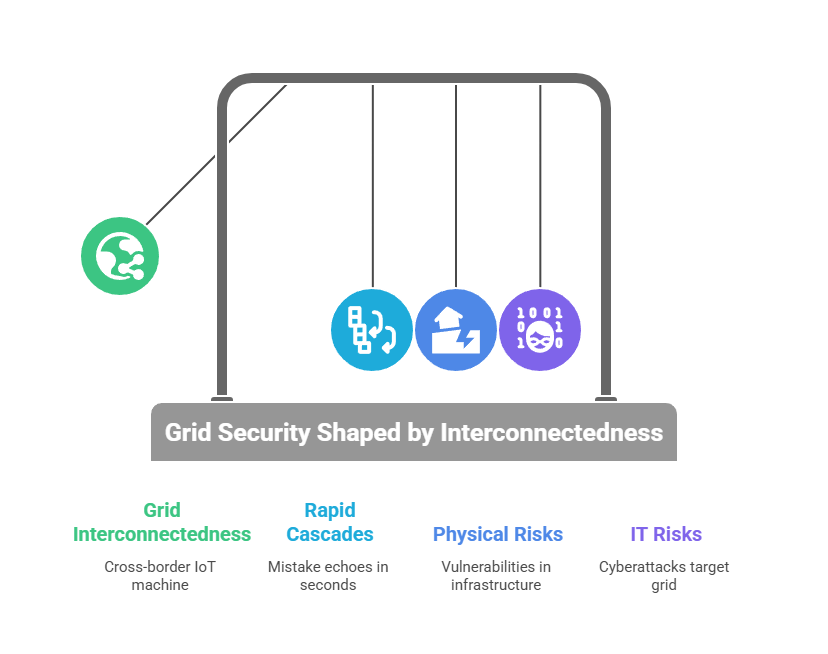

Europe’s grid isn’t one company—it’s a 50 Hz, cross-border IoT machine run by many TSOs (transmission operators) and thousands of DSOs (distribution operators) under ENTSO-E’s umbrella. That shapes security: a mistake (or attack) in one country can echo into another in seconds. This article breaks the tech into plain language, shows the physical and IT risks (including smart/“AMS” meters), and puts the recent Iberian blackout in context—without hype.

First, a map of the system—no acronyms left unexplained

- Control rooms: Operators run SCADA software to watch the grid and send commands.

- Substations: Fields of transformers and IEDs (intelligent relays) that open/close breakers in milliseconds.

- Wires & interconnectors: 220–400 kV AC lines and long HVDC cables tie countries together; power automatically flows where physics allows.

- Timing system: GPS/PTP clocks keep measurements aligned (vital for fast protection).

- Edge devices: Smart meters (AMI / AMS in Nordic parlance), solar/battery inverters, EV chargers—all talk to utility “head-end” platforms.

Why this matters for security: grid traffic is time-critical. You can’t slap heavy inspection on every packet if it adds delay that trips a feeder. Controls must be reliable and precise.

The European protocols—what they do and what “secure” really means

- IEC-104 (Europe’s SCADA workhorse): moves telemetry and control between control rooms and remote substations over TCP (port 2404). Base IEC-104 has no built-in crypto; in 2025 that’s unacceptable. Utilities should run it inside TLS (mutual certificates) or IPsec, restrict who can be a “master,” and alarm on unusual Operate commands or new masters appearing on 2404/TCP. (Industroyer-class malware abuses exactly this layer.)

- IEC 61850 (inside the substation):

  - MMS: configuration/measurements over TCP → protect with TLS + roles (“who may Operate”).

  - GOOSE: ultra-fast Layer-2 alarms/trips → add integrity/auth if the vendor supports it; otherwise isolate at L2/VLAN and monitor for new subscribers.

  - Sampled Values: digitized currents/voltages on the process bus → keep jitter near zero; never put inline boxes there unless engineered for it.

- ICCP/TASE.2 (control-room ↔ control-room): lets TSOs/DSOs exchange datasets (flows, schedules). Best practice: TLS with cert pinning, and only the whitelisted points pass through an OT-DMZ (a security buffer between IT and operations).

- Smart-metering (DLMS/COSEM): the European meter protocol family supports modern crypto, but security depends on implementation and key management. Poor device hardening, default keys, or weak head-end segregation remain common failure modes.

- Timing (GNSS/PTP): if time is wrong, phasor data and some protections go wrong. Harden GPS (redundant antennas/receivers, spoofing detection) and engineer PTP with boundary clocks and alarms on time skew.
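To make "alarm on unusual Operate commands" concrete, here is a minimal sketch of how a passive monitor might classify an IEC-104 frame and spot control-direction type IDs. The function and class names are hypothetical, the type-ID table is a small illustrative subset, and a real monitor would also have to handle TCP reassembly and multiple frames per segment.

```python
from dataclasses import dataclass
from typing import Optional

START_BYTE = 0x68  # every IEC 60870-5-104 APDU starts with this octet

# Illustrative subset of control-direction ASDU type IDs (not exhaustive).
COMMAND_TYPE_IDS = {
    45: "C_SC_NA_1 single command",
    46: "C_DC_NA_1 double command",
    47: "C_RC_NA_1 regulating step command",
}


@dataclass
class Apdu:
    frame_format: str               # "I", "S" or "U"
    type_id: Optional[int]          # ASDU type identification (I-format only)
    cause: Optional[int]            # cause of transmission, low 6 bits
    common_address: Optional[int]   # station address inside the ASDU


def parse_apdu(frame: bytes) -> Apdu:
    """Parse one complete APDU (hypothetical helper, no TCP reassembly)."""
    if len(frame) < 6 or frame[0] != START_BYTE:
        raise ValueError("not an IEC-104 APDU")
    if frame[1] != len(frame) - 2:
        raise ValueError("length octet does not match frame size")
    first_control = frame[2]
    if first_control & 0x01 == 0:
        # I-format: the ASDU follows the four control octets.
        if len(frame) < 12:
            raise ValueError("I-format frame too short for an ASDU header")
        return Apdu(
            frame_format="I",
            type_id=frame[6],
            cause=frame[8] & 0x3F,
            common_address=int.from_bytes(frame[10:12], "little"),
        )
    if first_control & 0x03 == 0x01:
        return Apdu("S", None, None, None)  # supervisory acknowledgement
    return Apdu("U", None, None, None)      # unnumbered (STARTDT, TESTFR, ...)


def is_control_command(apdu: Apdu) -> bool:
    """True when the frame carries one of the listed command type IDs."""
    return apdu.frame_format == "I" and apdu.type_id in COMMAND_TYPE_IDS
```

Feeding it a single-command activation frame such as `bytes.fromhex("680e000000002d010600010001000001")` yields type ID 45 with cause 6 (activation), which is the kind of event worth logging with its source address and timestamp.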

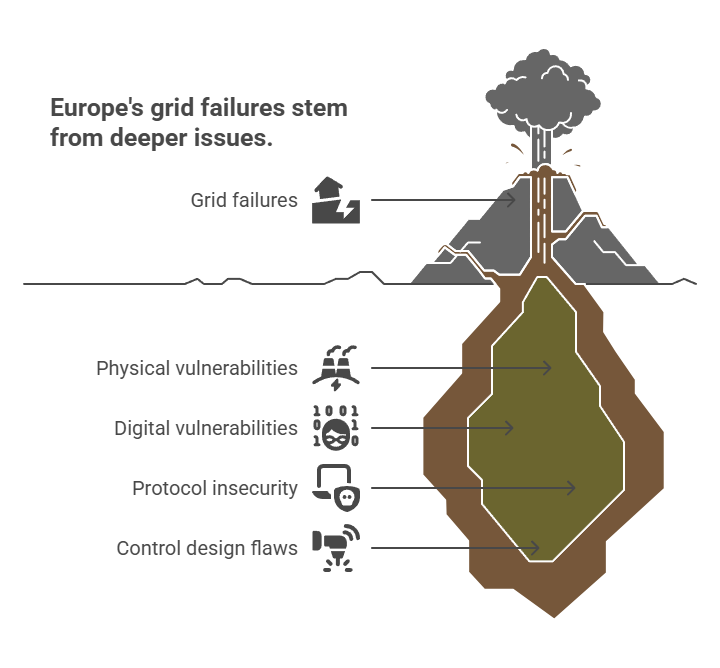

The risks – it’s not only cyber security

Physical risks (and why they cascade digitally)

- Fires, storms, tower strikes can trip interconnectors; automatic protections shed load to save the system. That’s what happened on 24 July 2021, when a French transmission incident separated Iberia from Continental Europe; the proximate cause was a wildfire near lines, not hacking.

- Substation sabotage/theft: cutting fiber, damaging transformers, or tampering with control cabinets can force operators into degraded, manual modes—exactly when cyber hygiene gets harder.

- Timing fragility: GPS antennas are small, exposed, and easy to jam/spoof; loss of timing pollutes many measurements at once.

- Shared physical keys: substations and transformer stations that feed whole neighborhoods are often secured by one and the same key, commonly called a “master key” or “general key.”

Cyber/IT risks (what attackers actually target)

- Unsecured IEC-104 over “trusted” MPLS → spoofed Operate commands to RTUs. Fix: TLS/IPsec, one master per RTU, and strict allow-lists.

- Inside-substation mischief (IEC 61850): rogue GOOSE subscribers, SV dataset changes, or MMS writes to relay settings. Fix: L2 isolation, vendor auth features where timing permits, and passive monitoring for subscriber/dataset changes.

- Control-room interties (ICCP): over-broad datasets or unpinned certificates between organizations. Fix: DMZ termination, pinned certs, named bilateral tables.

- Remote access sprawl: vendor TeamViewer/VPNs directly into substations. Fix: brokered access via OT-DMZ jump hosts (PAM), MFA, time-boxed approvals, full session recording.

- Timing attacks: GPS spoofing or unauthenticated PTP skew. Fix: dual GNSS, engineered PTP domains, holdover oscillators, and alarms.

- Supply-chain gaps: unsigned firmware, opaque components. Fix: demand SBOMs and signed updates in contracts (NIS2/NCCS push in this direction).
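The "one master per RTU" rule from the list above lends itself to a simple check against flow records. The sketch below assumes you already export flows (for example from a tap or firewall log) as source, destination, and destination port; the `Flow` type, the function name, and the addresses in the usage note are all hypothetical.

```python
from typing import Dict, Iterable, List, NamedTuple

IEC104_PORT = 2404  # TCP port the RTU (controlled station) listens on


class Flow(NamedTuple):
    src_ip: str    # host that opened the connection (the would-be master)
    dst_ip: str    # RTU address
    dst_port: int


def find_rogue_masters(
    flows: Iterable[Flow], allowed_master: Dict[str, str]
) -> List[Flow]:
    """Return IEC-104 flows whose source is not the RTU's single allowed master.

    allowed_master maps RTU address -> the one master address permitted.
    RTUs missing from the map are treated as "no master allowed".
    """
    rogue = []
    for flow in flows:
        if flow.dst_port != IEC104_PORT:
            continue  # only IEC-104 connections are in scope here
        if allowed_master.get(flow.dst_ip) != flow.src_ip:
            rogue.append(flow)
    return rogue
```

With `allowed_master = {"10.20.0.5": "10.10.0.2"}`, a connection from `10.10.0.99` to `10.20.0.5:2404` is returned as rogue, while the same source talking to port 443 is ignored.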

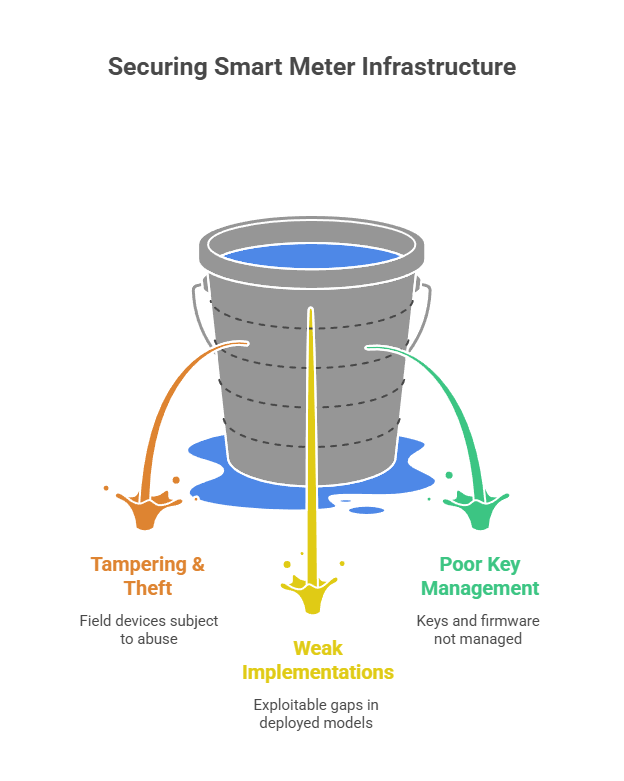

Smart meters / AMS: what’s real—and what isn’t

Smart meters don’t “run the grid,” but they touch billing, disconnection, and load control—and there are millions of them.

- Documented problems:

  - Tampering & theft: Malta uncovered thousands of altered meters a decade ago. That was criminal fraud, not nation-state hacking, but it proves field devices get abused.

  - Weak implementations: Researchers have shown exploitable gaps in some Spain-deployed models (older generations) and recurring DLMS/COSEM weaknesses when keys or firmware aren’t managed well.

- What defenders do (and what we should ask vendors for):

  - Crypto done right: DLMS/COSEM Security Suite 1/2 with per-meter keys, secure boot, and signed firmware.

  - Head-end segregation: keep AMI/AMS platforms on their own networks; expose only one-way data into analytics.

  - Rate-limited remote disconnect with approvals and alarms; treat bulk disconnect like operating a breaker.

  - Field hardening: seals help, but pair them with tamper sensors and exception analytics (“sudden loss of load across a neighborhood”).

  - Lifecycle: rotate keys, revoke lost devices, and patch like you mean it; smart meters are computers with seals.

  - Detection: watch for waves of config changes, mass reconnect/disconnect, or abnormal DLMS command sequences.
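As an illustration of "rate-limited remote disconnect with approvals," here is a minimal sliding-window gate a head-end could put in front of disconnect commands. The class name and the limits are hypothetical; a real deployment would persist state and raise an alarm whenever a request is refused.

```python
from collections import deque
from typing import Deque


class DisconnectGate:
    """Allow at most max_per_window approved disconnects per time window."""

    def __init__(self, max_per_window: int, window_seconds: float) -> None:
        self.max_per_window = max_per_window
        self.window_seconds = window_seconds
        self._accepted: Deque[float] = deque()  # timestamps of accepted requests

    def allow(self, now: float, approved: bool) -> bool:
        """Return True if a disconnect requested at time `now` may proceed."""
        if not approved:
            return False  # no approval, no disconnect, regardless of rate
        # Forget accepted requests that have aged out of the window.
        while self._accepted and now - self._accepted[0] >= self.window_seconds:
            self._accepted.popleft()
        if len(self._accepted) >= self.max_per_window:
            return False  # budget exhausted: refuse (and alarm, in practice)
        self._accepted.append(now)
        return True
```

With `DisconnectGate(max_per_window=2, window_seconds=60)`, two approved requests in quick succession pass and the third is refused until the window has moved on.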

AMS meters are not a single point of failure, but I’ve seen how badly they’re managed in Norway. The procedures for “unlocking” the port are not secure enough, and the sheer number of companies managing these meters shows that even when standards exist, it’s really hard to apply them consistently across hundreds of companies.

Case study: the 2025 Iberian blackout—could it have been an attack?

On 28 April 2025, Spain (and parts of Portugal) suffered a huge outage. Official statements since May–June 2025 say investigators found no evidence of a cyberattack on generation control centers or the national operator; analysis pointed to voltage surges and planning/operational failures that cascaded. In short: high-impact, non-cyber—though the door was left open to continue checking other layers.

Why we still care in a security article: the physics didn’t wait. A few local faults cascaded across a continent-scale machine, just as they would if someone spoofed controls or corrupted timing. This time it was not a well-coordinated attack, but the event showed how fragile the grid can be: had it been one, the outcome could have been far worse. So the lesson stands:

- Design so that one failure doesn’t cascade (good protection settings, sane automation).

- Treat every cross-border conduit (IEC-104, ICCP) as hostile by default: encrypt, authenticate, and monitor.

- Keep forensics-grade visibility (protocol-aware taps) so you can rule in/out cyber quickly and credibly.

You can also read a bit more on the Iberian blackout here: Lessons from the Iberian Blackout: The Role of V2G

The EU rulebook—what it actually asks for

Think of Europe’s security framework as three layers that fit together. NIS2 is the horizontal layer: it treats energy companies as “essential entities” and tells them to run a real cyber-risk program—not just a checklist. In practice that means doing regular risk assessments, controlling suppliers, and reporting serious incidents on a clock: an early warning within 24 hours, an incident notification within 72 hours, and a final report within one month. NIS2 is governance rather than a wiring diagram; it tells you what outcomes you must achieve and how quickly you must talk to your national CSIRT/authority when things go wrong.
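The reporting clock is easy to encode so that nobody has to do date arithmetic during an incident. The sketch below is an assumption-laden illustration: it starts the 24-hour and 72-hour clocks at the moment the entity becomes aware of the incident, and it starts the one-month clock at the incident notification (or at its deadline if none has been sent yet). Check your national transposition before relying on any of this; the function names are hypothetical.

```python
import calendar
from datetime import datetime, timedelta, timezone
from typing import Dict, Optional


def add_one_month(moment: datetime) -> datetime:
    """Same day next month, clamped to the last day of shorter months."""
    year = moment.year + (1 if moment.month == 12 else 0)
    month = 1 if moment.month == 12 else moment.month + 1
    day = min(moment.day, calendar.monthrange(year, month)[1])
    return moment.replace(year=year, month=month, day=day)


def nis2_deadlines(
    became_aware: datetime, notification_sent: Optional[datetime] = None
) -> Dict[str, datetime]:
    """Compute the three reporting deadlines described in the text.

    Assumption: the final report is due one month after the incident
    notification; if none was sent yet, its 72-hour deadline is used.
    """
    notification_deadline = became_aware + timedelta(hours=72)
    return {
        "early_warning": became_aware + timedelta(hours=24),
        "incident_notification": notification_deadline,
        "final_report": add_one_month(notification_sent or notification_deadline),
    }
```

For an incident detected on 30 January 2025 at 08:00 UTC, this gives an early warning due 31 January 08:00, a notification due 2 February 08:00, and a final report due 2 March 08:00.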

Beneath that sits the sector-specific piece: the Network Code on Cybersecurity (NCCS). This is binding electricity law that standardises how TSOs and DSOs manage cyber risk across cross-border power flows. It sets common minimum requirements, a recurring EU-wide risk-assessment process, and shared rules for monitoring, reporting, and crisis management between operators and regional coordination bodies. Where NIS2 says “have a program and report fast,” NCCS says “here’s how the electricity community should organise itself for cross-border operations.”

Finally, the CER Directive focuses on the physical side: guards, fences, redundancy, disaster planning, and the ability of “critical entities” to keep services running despite fires, floods, or sabotage. CER matters for cyber too, because the EU now treats physical and digital resilience as two halves of the same obligation; a cyberattack during a storm is still your problem to handle coherently.

A practical, Europe-ready plan (that covers physics and IT)

Start by segmenting the network the way the grid actually works, not the way the org chart looks. Put a controlled buffer—the OT-DMZ—between enterprise IT and the control-centre core. Inside each substation, treat the station bus (where operator messages and fast events live) separately from the process bus (where digitised currents/voltages flow and microseconds matter). Keep smart-meter head-ends, DER/solar control, and EV-charging platforms on their own “islands,” with only the minimum pathways back to operations. This is what stops a phishing email from turning into a breaker operation.
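One way to keep that segmentation honest is to write the allowed conduits down as data and test observed traffic against them. The zone names, ports, and the `ALLOWED_CONDUITS` table below are hypothetical placeholders for whatever your own zone model defines; the point is the default-deny check, not the specific entries.

```python
from typing import FrozenSet, Iterable, List, Tuple

Conduit = Tuple[str, str, int]  # (source zone, destination zone, TCP port)

# Hypothetical allow-list: anything not listed here is denied.
ALLOWED_CONDUITS: FrozenSet[Conduit] = frozenset({
    ("enterprise_it", "ot_dmz", 443),            # reporting portal in the DMZ
    ("control_centre", "ot_dmz", 443),           # data pushed outward only
    ("control_centre", "station_bus", 2404),     # IEC-104 to substations
    ("ami_head_end", "ot_dmz", 443),             # metering data to analytics
})


def is_allowed(src_zone: str, dst_zone: str, port: int) -> bool:
    """Default deny: a flow passes only if its conduit is explicitly listed."""
    return (src_zone, dst_zone, port) in ALLOWED_CONDUITS


def find_violations(observed: Iterable[Conduit]) -> List[Conduit]:
    """Return every observed flow that has no matching allowed conduit."""
    return [flow for flow in observed if not is_allowed(*flow)]
```

Under this table, `is_allowed("enterprise_it", "station_bus", 2404)` is `False`: the phishing-email-to-breaker path simply has no conduit.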

When those zones are in place, make identity and encryption the default. The European workhorse protocols, IEC-104 (for telemetry/controls), MMS (the 61850 client/server service in substations), and ICCP/TASE.2 (between control rooms), should run inside TLS with mutual certificates, or over IPsec, on untrusted links. For DNP3, switch on Secure Authentication. Enforce “one master per RTU,” pin certificates, and filter by the exact addresses and functions you intend to use; this is how you prevent spoofed Operate commands on port 2404/TCP.
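"TLS with mutual certificates" means the server refuses any peer that cannot present a certificate signed by a CA you trust. A minimal sketch with Python's standard `ssl` module follows, as it might be used in a TLS-terminating gateway in front of an IEC-104 endpoint. The function name and file paths are hypothetical, and real products expose these settings through their own configuration rather than Python.

```python
import ssl
from typing import Optional


def make_mutual_tls_server_context(
    ca_file: Optional[str] = None,
    cert_file: Optional[str] = None,
    key_file: Optional[str] = None,
) -> ssl.SSLContext:
    """Build a server-side TLS context that demands a client certificate."""
    context = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    context.minimum_version = ssl.TLSVersion.TLSv1_2  # refuse legacy versions
    context.verify_mode = ssl.CERT_REQUIRED           # client must present a cert
    if ca_file:
        # Only certificates chaining to this CA bundle are accepted.
        context.load_verify_locations(cafile=ca_file)
    if cert_file:
        # The gateway's own identity, presented to connecting masters.
        context.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return context
```

The paths are optional here only so the function can be exercised without key material; in production all three would be mandatory, and the accepted client certificates would additionally be pinned per master.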

Treat remote access as a brokered privilege, not a tunnel. Vendors and engineers land first in the OT-DMZ on a privileged access jump host; you require MFA, time-limited approvals, and full session recording. No direct vendor VPNs into substations—ever.
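The "brokered privilege" idea reduces to a check the jump host runs before opening any session. The sketch below is illustrative only: the `AccessGrant` fields and the rule that approver and requester must differ are assumptions, not features of any particular PAM product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class AccessGrant:
    user: str            # engineer or vendor account the grant was issued to
    target: str          # asset the grant covers, e.g. a substation gateway
    approved_by: str     # who signed off on the request
    starts_at: datetime  # beginning of the approved window
    duration: timedelta  # how long the window stays open


def may_connect(
    grant: AccessGrant, user: str, target: str, now: datetime, mfa_passed: bool
) -> bool:
    """Allow a session only with MFA, inside the window, for the exact target."""
    in_window = grant.starts_at <= now < grant.starts_at + grant.duration
    self_approved = grant.approved_by == grant.user
    return (
        mfa_passed
        and in_window
        and not self_approved
        and grant.user == user
        and grant.target == target
    )
```

A grant that started at 09:00 with a two-hour duration admits the named user at 10:30 and refuses the same user at 11:00, with or without MFA.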

Inside the substation, practice IEC 61850 hygiene. Give each bay its own VLAN; constrain multicast with static multicast MAC filters (or MMRP/GMRP where the switches support it) so that GOOSE, the millisecond trip/interlock messages, doesn’t flood the switch. Note that IGMP snooping only prunes IP multicast and does nothing for GOOSE, which rides directly on Ethernet. Turn on storm control, and use the vendor’s integrity/auth features for GOOSE/Sampled-Values where timing budgets allow. Lock down the SCL configuration files and device accounts so that only authorised engineers can push changes, and only during planned windows.

Remember that time is a critical asset. PMUs and some protection schemes depend on precise UTC. Use dual GNSS receivers, engineered PTP (IEEE 1588) with boundary/transparent clocks, and holdover oscillators so clocks stay sane during a satellite hiccup. Alarm on skew and treat time errors like you would a voltage excursion.
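"Alarm on skew" can be as simple as comparing the offsets reported by two independent time sources and grading the difference. The thresholds below are placeholders chosen for illustration, not values from any standard; set them from your own protection and PMU accuracy budgets.

```python
def classify_skew(
    offset_a_ns: int,
    offset_b_ns: int,
    warn_ns: int = 1_000,    # placeholder: 1 microsecond
    alarm_ns: int = 10_000,  # placeholder: 10 microseconds
) -> str:
    """Grade the disagreement between two clock offsets, in nanoseconds.

    offset_a_ns and offset_b_ns are each source's reported offset from the
    local clock, e.g. one per GNSS receiver or one GNSS and one PTP.
    """
    skew = abs(offset_a_ns - offset_b_ns)
    if skew >= alarm_ns:
        return "alarm"
    if skew >= warn_ns:
        return "warn"
    return "ok"
```

Two receivers that disagree by 200 ns grade as `"ok"`, 5 µs as `"warn"`, and 20 µs as `"alarm"`. A sudden jump between grades is itself a signal worth correlating with spoofing or jamming indicators.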

On the metering side, smart meters (AMI/“AMS”) are just small computers with seals. Defend them like it. Demand per-meter cryptographic keys, signed firmware and secure boot from vendors; keep the AMI head-end on its own network; rate-limit remote disconnect/reconnect; and watch analytics for unusual waves of commands or configuration changes. European standardisation work under CEN-CENELEC gives you a baseline to reference in contracts and technical specs.

For detection, watch behaviours, not just malware names. In practice that means alarms when a new master appears on 2404/TCP or when an Operate command arrives outside a work window (IEC-104); when a new GOOSE subscriber shows up or a Sampled-Values dataset/rate changes (IEC 61850); when an ICCP dataset or certificate changes on an inter-utility link; or when you see bursts of DLMS/COSEM disconnects and config writes in AMI that don’t match a planned job. Those are the moves that change the physics, and they’re the ones worth chasing.
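Two of those behavioural rules are small enough to sketch directly: an Operate command outside the agreed work window, and a Layer-2 talker that is not in the recorded baseline. The window hours, the `(vlan, mac)` representation, and the function names are assumptions for illustration; a real deployment would take them from the change calendar and from the substation's engineered configuration.

```python
from datetime import datetime, time
from typing import Iterable, Set, Tuple

Talker = Tuple[int, str]  # (VLAN ID, source MAC address)


def outside_work_window(
    timestamp: datetime, start: time = time(8, 0), end: time = time(16, 0)
) -> bool:
    """True if a command arrived outside the (hypothetical) daily work window."""
    return not (start <= timestamp.time() < end)


def new_talkers(baseline: Set[Talker], observed: Iterable[Talker]) -> Set[Talker]:
    """Return every (vlan, mac) pair seen on the wire but absent from baseline."""
    return set(observed) - baseline
```

An Operate command stamped 02:13 trips the first rule; a MAC address that starts sending on a bay VLAN without appearing in the baseline trips the second. Neither proves an attack, but both are cheap to check and rare in a well-run substation.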

And then rehearse the bad day. Sit operators, protection engineers and the SOC around one table and walk through two drills: “a rogue master operates a bay” and “GPS spoofing causes timing drift.” Decide who pulls which lever, what gets blocked at which conduit, what gets logged, and who files the 24-hour/72-hour/one-month reports under NIS2. Write it down; run it again next quarter.

Last, put the supply-chain obligations in writing. Ask for SBOMs, signed updates, vulnerability-disclosure SLAs, and insist that any remote vendor work uses your PAM inside your OT-DMZ. NIS2 and the NCCS give you the policy backing to make those requirements stick in Europe’s electricity sector.

Bottom line

Europe’s grid is both physical and digital, and it fails in both ways. Fires can split a synchronous area; sloppy remote access can do the same. Speak the system’s languages (IEC-104, 61850, ICCP), secure them the way they work, and design your controls so that physics stays stable even when IT is under stress. That’s how you keep the lights on, whether the next big event is a storm, a misoperation, or an adversary.